Hi jacob,

Recently I’ve managed to retrieve the network logs for the error occurred, however, the behaviour now seems to be different where we’re facing intermittent network shutdowns which might occur a few mins after network startup or maybe after a few hours or days.

I’m not sure if the faulting nodes seems to be from the Panasonic drivers or Yaskawa drivers but sometimes it randomly occurs when moving these axes.

Attached is the full network log file starting from when the error is first thrown to its shutdown state:

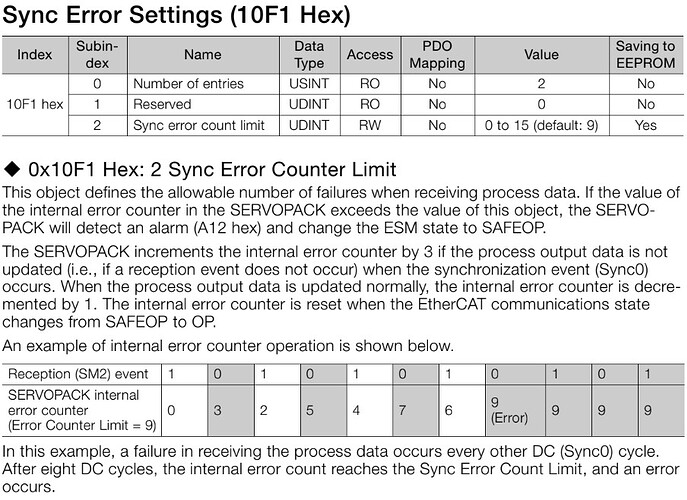

RMPNetwork: /!\ 10:51:45.245 EtherCAT EtherCAT.cpp:3135 Last cyclic frame was 1100 us

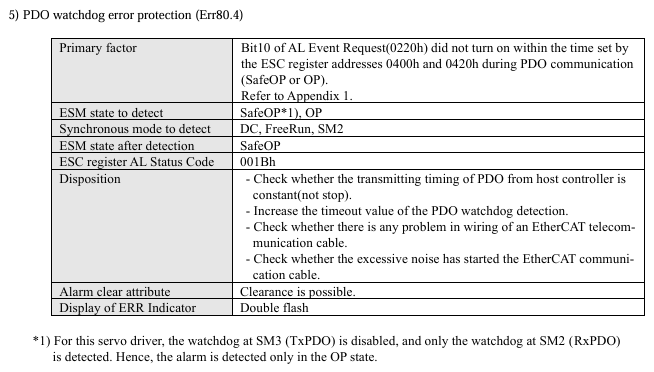

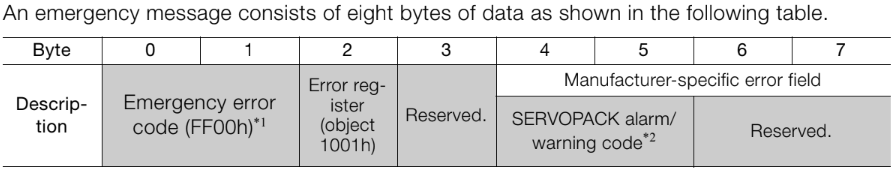

RMPNetwork: /!\ 10:51:45.877 EtherCAT EcSlave.cpp:2126 'Drive 0 (Panasonic Minas 6)' (1001): CoE - Emergency (Hex: 0000, 00, '00 00 00 00 00').

RMPNetwork: /!\ 10:51:45.934 EtherCAT EcSlave.cpp:2126 'Drive 1 (Panasonic Minas 6)' (1002): CoE - Emergency (Hex: 0000, 00, '00 00 00 00 00').

RMPNetwork: /!\ 10:51:45.992 EtherCAT EcSlave.cpp:2126 'Drive 2 (Panasonic Minas 6)' (1003): CoE - Emergency (Hex: 0000, 00, '00 00 00 00 00').

RMPNetwork: /!\ 10:51:46.060 EtherCAT EtherCAT.cpp:3135 Last cyclic frame was 1141 us

RMPNetwork: /!\ 10:51:46.374 EtherCAT EcSlave.cpp:2126 'Drive 13 (Panasonic Minas 6)' (1014): CoE - Emergency (Hex: 0000, 00, '00 00 00 00 00').

RMPNetwork: /!\ 10:51:46.432 EtherCAT EcSlave.cpp:2126 'Drive 14 (Panasonic Minas 6)' (1015): CoE - Emergency (Hex: 0000, 00, '00 00 00 00 00').

RMPNetwork: /!\ 10:51:46.489 EtherCAT EcSlave.cpp:2126 'Drive 15 (Panasonic Minas 6)' (1016): CoE - Emergency (Hex: 0000, 00, '00 00 00 00 00').

RMPNetwork: /!\ 10:51:46.579 EtherCAT EcSlave.cpp:2126 'Drive 17 (Panasonic Minas 6)' (1018): CoE - Emergency (Hex: 0000, 00, '00 00 00 00 00').

RMPNetwork: /!\ 10:51:46.690 EtherCAT EtherCAT.cpp:3135 Last cyclic frame was 1098 us

RMPNetwork: /!\ 10:51:47.380 EtherCAT EtherCAT.cpp:3135 Last cyclic frame was 1101 us

RMPNetwork: /!\ 10:51:48.570 EtherCAT EtherCAT.cpp:3135 Last cyclic frame was 1251 us

RMPNetwork: /!\ 10:51:49.569 EtherCAT EtherCAT.cpp:3135 Last cyclic frame was 1149 us

RMPNetwork: /!\ 10:51:51.340 EtherCAT EtherCAT.cpp:3135 Last cyclic frame was 4399 us

RMPNetwork: (i) 10:51:51.378 EtherCAT YTime.cpp:139 Calculated Clock Adjustment (697) too high, clamping to 345

RMPNetwork: (i) 10:51:51.398 EtherCAT YTime.cpp:139 Calculated Clock Adjustment (596) too high, clamping to 517

RMPNetwork: /!\ 10:51:51.401 EtherCAT EcDcMaster.cpp:912 1 working counter failure. WC = 0, expected 1. cmd=Logical Write (LWR)

RMPNetwork: /!\ 10:51:51.401 EtherCAT EcDcMaster.cpp:912 2 working counter failure. WC = 0, expected 1. cmd=Logical Write (LWR)

RMPNetwork: /!\ 10:51:51.401 EtherCAT EcDcMaster.cpp:912 3 working counter failure. WC = 0, expected 1. cmd=Logical Write (LWR)

RMPNetwork: (X) 10:51:51.401 EtherCAT EcDcMaster.cpp:935 Abnormal response of slaves to cyclic commands. Please, check number and state of slaves.

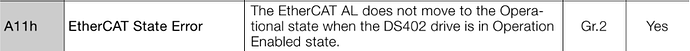

RMPNetwork: /!\ 10:51:51.403 EtherCAT EcSlave.cpp:2126 'Drive 6 (Yaskawa)' (1007): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.403 EtherCAT EcSlave.cpp:2126 'Drive 11 (Yaskawa)' (1012): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.403 EtherCAT EcSlave.cpp:2126 'Drive 16 (Yaskawa)' (1017): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1001) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1002) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1003) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1004) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1005) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1006) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1007) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1008) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1009) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1010) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1011) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1012) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1013) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.403 EtherCAT EcSlave.cpp:545 Node Addr (1014) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.406 EtherCAT EcSlave.cpp:545 Node Addr (1015) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.406 EtherCAT EcSlave.cpp:545 Node Addr (1016) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.406 EtherCAT EcSlave.cpp:545 Node Addr (1017) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.407 EtherCAT EcSlave.cpp:545 Node Addr (1018) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.407 EtherCAT EcSlave.cpp:545 Node Addr (1019) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.407 EtherCAT EcSlave.cpp:545 Node Addr (1020) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.408 EtherCAT EcSlave.cpp:545 Node Addr (1021) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.408 EtherCAT EcSlave.cpp:545 Node Addr (1022) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.409 EtherCAT EcSlave.cpp:545 Node Addr (1023) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.409 EtherCAT EcSlave.cpp:545 Node Addr (1024) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.409 EtherCAT EcSlave.cpp:545 Node Addr (1025) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.410 EtherCAT EcSlave.cpp:545 Node Addr (1026) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.410 EtherCAT EcSlave.cpp:545 Node Addr (1027) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.411 EtherCAT EcSlave.cpp:545 Node Addr (1028) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.411 EtherCAT EcSlave.cpp:545 Node Addr (1029) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.411 EtherCAT EcSlave.cpp:545 Node Addr (1030) : AL Status (0x8), Code (0x0)

RMPNetwork: (i) 10:51:51.412 EtherCAT EcSlave.cpp:545 Node Addr (1031) : AL Status (0x8), Code (0x0)

RMPNetwork: /!\ 10:51:51.412 EtherCAT EcSlave.cpp:2126 'Drive 7 (Yaskawa)' (1008): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.413 EtherCAT EcSlave.cpp:2126 'Drive 12 (Yaskawa)' (1013): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.415 EtherCAT EcSlave.cpp:2126 'Drive 3 (Yaskawa)' (1004): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.415 EtherCAT EcSlave.cpp:2126 'Drive 8 (Yaskawa)' (1009): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.415 EtherCAT EcSlave.cpp:2126 'Drive 18 (Yaskawa)' (1019): CoE - Emergency (Hex: ff00, 01, '00 12 0a 00 00').

RMPNetwork: /!\ 10:51:51.415 EtherCAT EcSlave.cpp:2126 'Drive 4 (Yaskawa)' (1005): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.416 EtherCAT EcSlave.cpp:2126 'Drive 9 (Yaskawa)' (1010): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.416 EtherCAT EcSlave.cpp:2126 'Drive 19 (Yaskawa)' (1020): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.417 EtherCAT EcSlave.cpp:2126 'Drive 5 (Yaskawa)' (1006): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.417 EtherCAT EcSlave.cpp:2126 'Drive 10 (Yaskawa)' (1011): CoE - Emergency (Hex: ff00, 01, '00 11 0a 00 00').

RMPNetwork: /!\ 10:51:51.432 EtherCAT EtherCAT.cpp:769 State changed from Running to StoppingOnError

RMPNetwork: (X) 10:51:51.433 EtherCAT RMPNetworkStarter.cpp:150 Ready to Shutdown

RMPNetwork: (i) 10:51:51.433 EtherCAT RMPNetworkStarter.cpp:184 Main Network Loop finished

RMPNetwork: (X) 10:51:51.558 EtherCAT RMPNetworkFirmware.cpp:1804 Failed to get Service Channel semaphore

RMPNetwork: (i) 10:51:51.558 EtherCAT RMPNetworkFirmware.cpp:1484 Exiting ServiceChannel Thread

RMPNetwork: /!\ 10:51:52.995 EtherCAT EtherCAT.cpp:769 State changed from StoppingOnError to Error

RMPNetwork: /!\ 10:51:55.183 EtherCAT EtherCAT.cpp:3978 --> Close driver

May I know what might be the root cause for this and what does the CoE - Emergency (Hex) codes return as the driver side just returns a general “EtherCAT communication was not in Operational state” alarm/error

or

What are some things that we could try on to mitigate this issue or prevent it from occuring?

Your help is very much appreciated. Thank you!